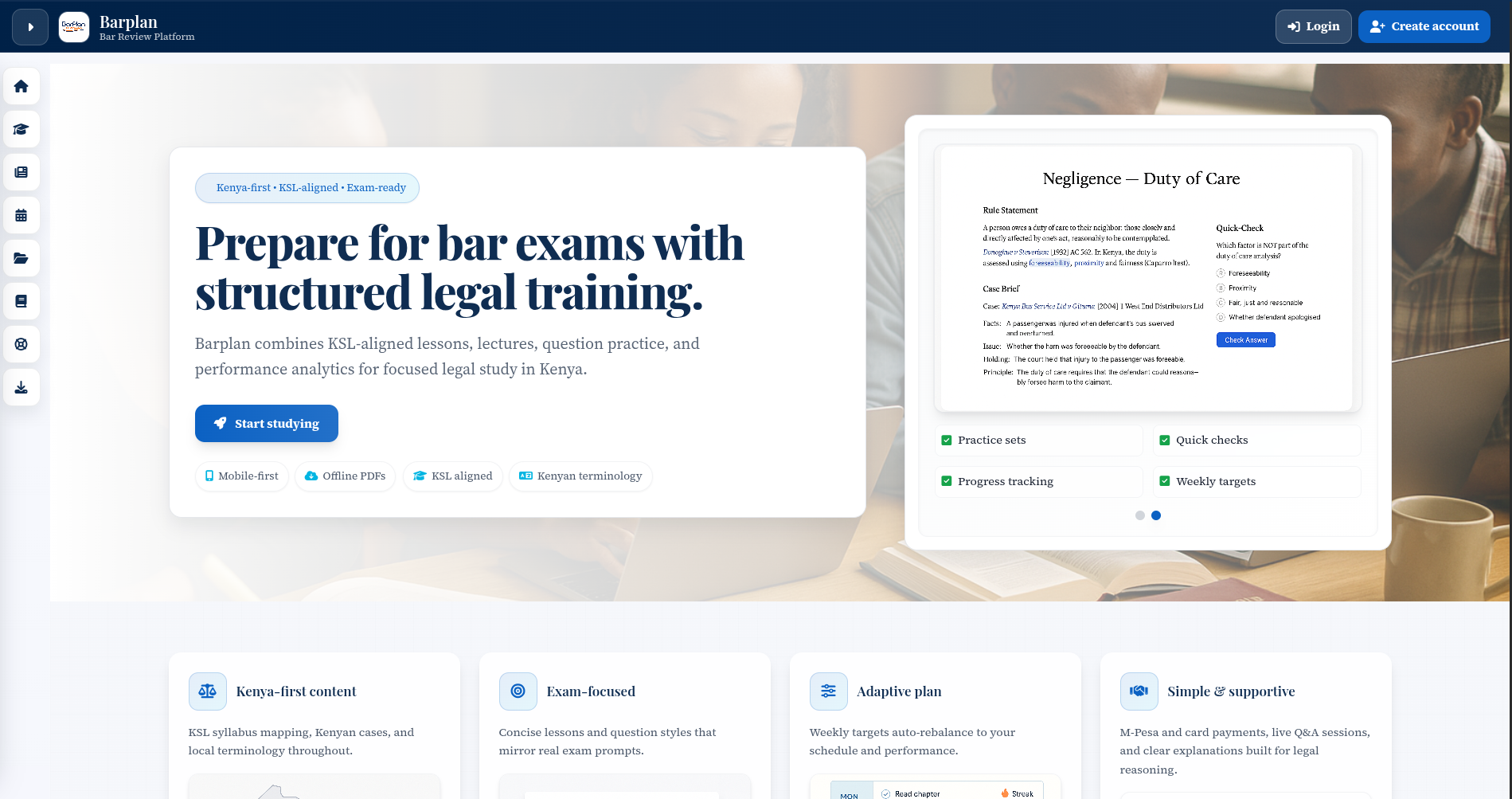

Barplan: From Curriculum Structure to Exam-Ready Practice Engine

Lewis Kiganjo

Product Engineer

Building Barplan: From Content Platform to Performance Engine

Most learning platforms fail in a very predictable way.

They deliver content.

They track progress.

They look complete.

But they don’t actually improve decisions.

The Starting Problem

Barplan wasn’t supposed to be just another legal learning platform. The objective was sharper:

Build a system that helps Kenyan legal learners prepare, perform, and adapt, not just consume.

That distinction changed everything.

Instead of asking “how do we deliver curriculum?”, the real question became:

- How does a student know what to study next?

- How do they simulate real exam pressure?

- How do they recover from weak areas efficiently?

That’s where the architecture began.

Structuring the Learning Domain

The first decision was non-negotiable: predictability over flexibility.

I mapped the entire domain into a strict hierarchy:

Program → Course → Module → Lesson

This wasn’t just about organization.

It ensured:

- Deterministic progress tracking

- Clear enrollment boundaries

- Reliable analytics aggregation

When a student progresses, the system knows exactly what that means: no ambiguity, no edge-case chaos.
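To make the hierarchy concrete, here is a minimal sketch of how progress could roll up from lessons to a whole program. The class and field names (Lesson, Module, Course, Program, completed) and the "share of completed lessons" rule are my assumptions for illustration, not Barplan's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Lesson:
    title: str
    completed: bool = False


@dataclass
class Module:
    title: str
    lessons: list[Lesson] = field(default_factory=list)


@dataclass
class Course:
    title: str
    modules: list[Module] = field(default_factory=list)


@dataclass
class Program:
    title: str
    courses: list[Course] = field(default_factory=list)


def program_lessons(program: Program) -> list[Lesson]:
    """Flatten the strict Program -> Course -> Module -> Lesson tree."""
    return [
        lesson
        for course in program.courses
        for module in course.modules
        for lesson in module.lessons
    ]


def program_progress(program: Program) -> float:
    """Fraction of completed lessons (assumed rule); 0.0 for an empty program."""
    lessons = program_lessons(program)
    if not lessons:
        return 0.0
    return sum(lesson.completed for lesson in lessons) / len(lessons)
```

Because every lesson has exactly one parent chain, the same completion data always produces the same number at every level.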

Separating Practice from Execution

Most platforms blur practice generation and attempt execution into one messy layer. Barplan didn’t.

I split it into two distinct systems:

1. Set Builder

- Responsible for composing question sets

- Handles topic distribution, difficulty, and constraints

- Stateless by design

2. Attempt Engine

- Manages session lifecycle

- Tracks answers, timing, and submission

- Enforces grading logic

This separation made the system:

- Easier to maintain

- Easier to extend (e.g. adaptive sets later)

- Much more predictable under load
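The split can be sketched roughly as follows: a pure function that composes a set, and a small stateful object that runs the attempt. Everything named here (Question, build_set, Attempt, the 1 to 3 difficulty scale, the seed parameter) is a hypothetical stand-in, and the one-point-per-correct-answer grading is an assumed rule.

```python
import random
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Question:
    id: str
    topic: str
    difficulty: int  # assumed scale: 1 = easy, 3 = hard
    answer: str


def build_set(pool, per_topic, max_difficulty, seed):
    """Stateless set builder: identical inputs always yield the same set."""
    rng = random.Random(seed)  # local RNG, no shared state
    chosen = []
    for topic, count in per_topic.items():
        candidates = [
            q for q in pool
            if q.topic == topic and q.difficulty <= max_difficulty
        ]
        if len(candidates) < count:
            raise ValueError(f"not enough questions for topic {topic!r}")
        chosen.extend(rng.sample(candidates, count))
    return chosen


@dataclass
class Attempt:
    """Attempt engine: owns the session lifecycle, answers, and grading."""
    questions: list[Question]
    answers: dict[str, str] = field(default_factory=dict)
    submitted: bool = False

    def record(self, question_id: str, answer: str) -> None:
        if self.submitted:
            raise RuntimeError("attempt already submitted")
        self.answers[question_id] = answer

    def submit(self) -> int:
        """Close the attempt and return the number of correct answers."""
        self.submitted = True
        return sum(
            1 for q in self.questions if self.answers.get(q.id) == q.answer
        )
```

The set builder never touches attempt state, so a different composition strategy could replace build_set without changing Attempt.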

Designing for Exam Reality

Mock exams weren’t treated as a feature.

They were treated as a simulation system.

That meant enforcing constraints:

- Strict time limits (no abusing pause to buy time)

- Auto-submit on timeout

- Mixed-topic coverage

- Immutable grading rules

The key insight:

If the system doesn’t create pressure, it doesn’t prepare the student.
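A minimal sketch of the timing rule, assuming a fixed deadline computed once at the start and a clock value passed in by the caller. The MockExam class and its fields are illustrative names, not the production model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class MockExam:
    started_at: datetime
    time_limit: timedelta
    answers: dict[str, str] = field(default_factory=dict)
    submitted_at: Optional[datetime] = None

    @property
    def deadline(self) -> datetime:
        # Fixed at start: there is no pause that moves it.
        return self.started_at + self.time_limit

    def answer(self, question_id: str, choice: str, now: datetime) -> bool:
        """Record an answer; return False if the exam is already closed."""
        if self.submitted_at is None and now >= self.deadline:
            # Auto-submit on timeout, stamped at the deadline itself.
            self.submitted_at = self.deadline
        if self.submitted_at is not None:
            return False
        self.answers[question_id] = choice
        return True
```

Passing the current time in as an argument keeps the rule easy to test and leaves the server, not the browser, in charge of the clock.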

The Planner Problem No One Solves

Study planners usually fail silently.

Tasks pile up. Students fall behind. The system says nothing.

Barplan took a different approach.

Task Reflow System

Instead of letting overdue tasks accumulate:

- Missed tasks are reassigned

- Future schedules are adjusted dynamically

- Workload is kept realistic

This turns the planner into something actionable, not aspirational.
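One way to sketch the reflow idea is shown below: overdue tasks move to today, and anything that would push a day past a workload cap spills onto the next day. The Task fields and the daily_cap_minutes parameter are assumptions for illustration; the real scheduling rules may differ.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Task:
    name: str
    due: date
    minutes: int
    done: bool = False


def reflow(tasks, today, daily_cap_minutes):
    """Reschedule pending tasks so no day exceeds the cap (assumed policy)."""
    pending = sorted((t for t in tasks if not t.done), key=lambda t: t.due)
    load = {}  # minutes already planned per day
    for task in pending:
        day = max(task.due, today)  # overdue tasks start from today
        # Spill forward while the day is over the cap. An empty day always
        # accepts the task, so an oversized task cannot loop forever.
        while load.get(day, 0) > 0 and load[day] + task.minutes > daily_cap_minutes:
            day += timedelta(days=1)
        task.due = day
        load[day] = load.get(day, 0) + task.minutes
    return pending
```

With a 120-minute cap and three 60-minute tasks that are all due today or earlier, two land on today and the third moves to tomorrow.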

Enrollment Without Friction

Another key decision:

Enrollment should cascade.

When a student enrolls in a program:

- They automatically gain access to all published courses

- No manual linking

- No fragmented onboarding

This reduced:

- Admin overhead

- Student confusion

- Setup time (to near zero)
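A cascading enrollment can be sketched by storing only the program enrollment and deriving course access when it is needed. The names below (Course, Program, accessible_course_ids, the published flag) are hypothetical, and deriving access at read time is one possible design rather than a description of the actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Course:
    id: str
    published: bool


@dataclass
class Program:
    id: str
    courses: list[Course] = field(default_factory=list)


def accessible_course_ids(student_id, enrollments, programs):
    """Derive course access from program enrollments.

    enrollments: student id -> set of program ids
    programs:    program id -> Program
    """
    course_ids = set()
    for program_id in enrollments.get(student_id, set()):
        for course in programs[program_id].courses:
            if course.published:  # unpublished courses stay hidden
                course_ids.add(course.id)
    return course_ids
```

In this variant there are no per-course links to create or repair, and a course published later becomes visible to enrolled students on the next lookup.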

Attempt State: Designed for Reality

Students don’t always finish what they start.

So the system accounts for that.

- Attempts are resumable

- Time continues running where applicable (for example, in timed mocks)

- Auto-submit ensures fairness

No data loss. No loopholes.
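Resuming can be sketched as saving the answers alongside an absolute deadline, then recomputing the remaining time on return. The function names and the JSON snapshot format here are assumptions for illustration only.

```python
import json
from datetime import datetime


def save_attempt(answers, deadline):
    """Serialize in-progress state; the deadline is absolute, not a countdown."""
    return json.dumps({"answers": answers, "deadline": deadline.isoformat()})


def resume_attempt(snapshot, now):
    """Restore answers and work out how much time is left at `now`."""
    state = json.loads(snapshot)
    deadline = datetime.fromisoformat(state["deadline"])
    remaining = max(0.0, (deadline - now).total_seconds())
    return {
        "answers": state["answers"],
        "remaining_seconds": remaining,
        # If the clock ran out while the student was away, close the attempt.
        "auto_submitted": remaining == 0.0,
    }
```

Storing the deadline rather than a remaining-time counter means closing the tab does not stop the clock, while every saved answer survives.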

Beyond Scores: Structured Error Intelligence

Raw scores are useless without context.

So instead of just storing results, the system tags each error with structured metadata that supports:

- Topic-level weaknesses

- Pattern recognition across attempts

- Repeat-mistake detection

This enabled something more valuable:

Diagnostic Analytics

Students can now see:

- Where they are failing

- Whether they are improving

- What to focus on next
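As a rough illustration, each wrong answer could be stored as a small tagged record and then queried for weak topics and repeated mistakes. The ErrorTag shape and both helper functions are hypothetical; the real metadata is likely richer.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorTag:
    attempt_id: str
    question_id: str
    topic: str


def weak_topics(errors, top_n=3):
    """Topics with the most tagged errors, most frequent first."""
    counts = Counter(e.topic for e in errors)
    return [topic for topic, _ in counts.most_common(top_n)]


def repeat_mistakes(errors):
    """Question ids answered wrongly in more than one attempt."""
    attempts_by_question = {}
    for e in errors:
        attempts_by_question.setdefault(e.question_id, set()).add(e.attempt_id)
    return {q for q, attempts in attempts_by_question.items() if len(attempts) > 1}
```

Once errors are records rather than a single score, "what to focus on next" becomes a query instead of a guess.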

Analytics Driven by Real Questions

Every dashboard was built from one principle:

What decision does this help the student make?

So instead of vanity metrics, the system surfaces:

- Progress: how far they’ve gone

- Trend direction: improving or declining

- Workload: what’s realistically ahead

No noise. Just actionable insight.
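Trend direction, for instance, could be computed by comparing the average of the most recent scores with the average of the scores just before them. The window size, the tolerance, and the extra "steady" and "not enough data" labels are my assumptions for this sketch.

```python
from statistics import mean


def trend(scores, window=3, tolerance=2.0):
    """Compare the latest `window` scores with the `window` before them."""
    if len(scores) < 2 * window:
        return "not enough data"
    recent = mean(scores[-window:])
    previous = mean(scores[-2 * window:-window])
    if recent - previous > tolerance:
        return "improving"
    if previous - recent > tolerance:
        return "declining"
    return "steady"  # change is within the tolerance band
```

The tolerance keeps a one-point wobble between mocks from being reported as a change in direction.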

The Core Rule That Shaped Everything

Every feature had to pass one test:

Does this improve a student decision?

If not, it didn’t make it into the system.

The Outcome

What emerged wasn’t just a learning platform.

It became a dual-mode system:

1. Daily Learning Engine

- Structured content

- Guided progression

- Adaptive planning

2. Exam Simulation Layer

- Timed mocks

- Strict grading

- Real pressure conditions

All of it backed by:

- Clean architecture

- Clear separation of concerns

- Scalable operational design

Final Reflection

This wasn’t about building features.

It was about building feedback loops.

Because in the end:

- Content teaches

- Practice reveals

- Analytics guides

- Systems enforce discipline

And when those four align, students don’t just learn.

They perform.